This is a long post. It analyzes a paper that recently appeared in Nature. It’s not highly technical but does get into some important analytical subtleties. I often don’t know where to start (or stop) with the critiques of science papers, or what good it will do anyway. But nobody ever really knows what good any given action will do, so here goes. The study topic involves climate change, but climate change is not the focus of either the study or this post. The issues are, rather, mainly ecological and statistical, set in a climate change situation. The study illustrates some serious and diverse problems.

Before I get to it, a few points:

- The job of scientists, and science publishers, is to advance knowledge in a field

- The highest profile journals cover the widest range of topics. This gives them the largest and most varied readerships, and accordingly, the greatest responsibilities for getting things right, and for publishing things of the highest importance

- I criticize things because of the enormous deficit of critical commentary from scientists on published material, and the failures of peer review. The degree to which the scientific enterprise as a whole just ignores this issue is a very serious indictment upon it

- I do it here because I’ve already been down the road–twice in two high profile journals–of doing it through journals’ established procedures (i.e. the peer-reviewed “comment”); the investment of time and energy, given the returns, is just not worth it. I’m not wasting any more of my already limited time and energy playing by rules that don’t appear to me designed to actually resolve serious problems. Life, in the end, boils down to determining who you can and cannot trust and acting accordingly

For those without access to the paper, here are the basics. It’s a transplant study, in which perennial plants are transplanted into new environments to see how they’ll perform. Such studies have at least a 100-year history, dating to genetic studies by Bateson, the Carnegie Institution, and others. In this case, the authors focused on four forbs (broad-leaved, non-woody plants) occurring in mid-elevation mountain meadows in the Swiss Alps. They wanted to explore the effects of new plant community compositions and temperature (T) change, alone and together, on three fitness indicators: survival rate, biomass, and fraction flowering. They attempted to simulate having either (1) entire plant communities, or (2) just the four target species, experience sudden T increases, by moving them downslope 600 meters. [Of course, a real T change in a montane environment would move responsive taxa upslope, not down.] More specifically, they wanted to know whether competition with new plant taxa–in a new community assemblage–would make any observed effects of T increases worse, relative to those experienced under competition with species they currently co-occur with.

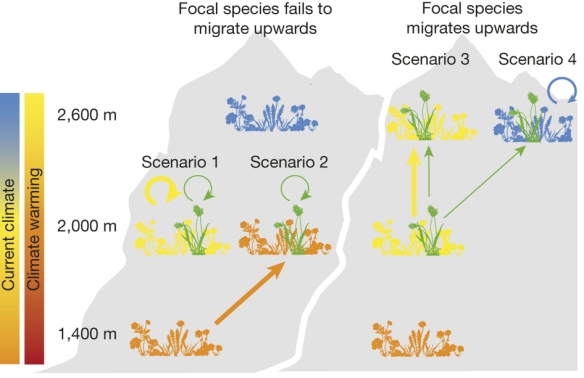

Their Figure 1 illustrates the strategy:

Figure 1: Scenarios for the competition experienced by a focal alpine plant following climate warming.

If the focal plant species (green) fails to migrate, it competes either with its current community (yellow) that also fails to migrate (scenario 1) or, at the other extreme, with a novel community (orange) that has migrated upwards from lower elevation (scenario 2). If the focal species migrates upwards to track climate, it competes either with its current community that has also migrated (scenario 3) or, at the other extreme, with a novel community (blue) that has persisted (scenario 4).

There are two ways to create new plant communities involving the four target taxa: (1) by having the four be static (not migrating upslope with T changes) while the rest of their original community does move up, and which in turn is replaced by other taxa from lower elevations, or (2) allowing the target taxa to move upslope (thus, responding to T change), leaving their original plant community behind and moving into a new one, one which is not responsive to T increases. To conduct such a study, one would ideally create these new communities by transplantation, seed sowing, etc. and then wait for the climate to change and observe the results. But that’s unfeasible due to the time required, so instead they created instant T changes by transplantation downslope (and, as a control for the effects of the horticultural disturbance/shock involved, also at the original elevations).

The 2000 m (~ 6500 ft) elevation community was the focal point, all four species occurring there naturally, but not 600 m below or above it (all on SE facing slopes). The transplantation process involved transplanting individuals of the four taxa into a number of intact, small meadow sods (each about 0.5 m^2), the sods containing taxa that they either did, or did not, currently co-occur with, as just described. These sods were then replanted at either their original elevation or downslope 600 m, depending on the treatment specified. Make sense?

They measured the temperatures at the three elevations involved, and indeed, they differed along the lines expected:

I am all for creative thinking when it comes to experimental design, so the authors deserve credit in this regard. But such thinking must be very well considered and capable of addressing the question of interest, and there are several problems on that score here.

First, it should be obvious that any ability to generalize from the results of this study is going to be extremely limited. Indeed, this is almost always a major limitation to manipulative ecology experiments. The response of four forb species limited to a 1200 m mid-elevational band, on SE-facing slopes in a small part of the Swiss Alps, is just not going to scale up to any generalization regarding species responses to T change. These are just four random taxa, for none of which do we know their ecological importance, since the authors don’t address it. We do know that they are from montane meadow communities, and can thus fairly wonder why some monocots (grasses, sedges, rushes), which are almost always the dominant life form in a meadow vegetation type, were not instead the focus of the study, or at least included.

There is no inherent problem, per se, with either a geographically or taxonomically limited study scope; one can find many, many such studies in discipline- or geography-specific journals. But Nature isn’t one of those journals–it is instead one of the big players, a multi-discipline “glamour journal”, arguably the type case in fact. One supposedly publishes in Nature only if one has results of broad, important impact, a fact that Nature has had no problem making clear to everyone. So…a study like this had better provide some definite evidence of an important, general finding with respect to expected plant species and/or community responses to temperature change, and preferably with a theoretical basis.

But it doesn’t–it doesn’t even come remotely close.

The reason for this is that there are problems with the study design, and also with the statistical analysis of the results. I can’t even get into all the design problems without making this post considerably longer than it is, but there are several. The length of the experiment is arguably the most obvious one. It was a two year study, and moreover, for two of their three response variables, data from only the second year was used.

The basic finding reported by the authors is that competition from other taxa, in newly composed plant communities undergoing temperature increase, has a larger negative effect on the target species than does either (1) community change without temperature change, or (2) temperature change without community change. The principal result is given in their Figure 2 (below); in what follows I focus just on panels (a) and (b) of it (survival rates), because survival (along with reproduction) rates are the bottom line when assessing population and community dynamics.

Figure 2: Effect of novel competitors on alpine plant performance.

Survival over 2 years (a, b), second year biomass (c, d) and second year flowering (e, f) of focal species exposed to different potential competition scenarios following climate warming (see Table 1). Shown are means (s.e.m.) of the raw data. When the novel competitor × site × species interaction was significant (a–d), P values for species-by-site specific contrasts were taken from the full model (see Table 2 for statistics and n), else from site-specific contrasts averaging over species (e, f); P values <0.005 remain significant (α = 0.05) after Holm–Bonferroni correction for multiple comparisons.

Here’s the statistical/analytical problem, and it’s not obvious. In fact, I found it quite hard to explain it well when writing.

In both (a) and (b) above, the yellow bars give the fractional survival of the four taxa when competing only against their own original community: (a) when moved down 600 m (to 1400 m), and (b) at their original elevation (2000 m). In each case, they are reference points against which to compare the effects of new/different competitors. Eyeballing panel (b) we see that three of the species had roughly an 80 to 90 percent survival rate, and the fourth one about 60 percent. This is the rate of surviving the transplantation process alone, since these individuals did not change elevation. The yellow bars from panel (a) also give results from competing against the original community, but at a higher temperature (2.6 deg C, mean), since the community’s been moved downslope 600 m. The survival rate for three of the four taxa is now considerably lower, ranging between about 30 and 70 percent, while that of the fourth (P. atrata) is higher, around 95 percent. But this result is not an important finding, as it’s simply another confirmation of what geneticists have repeatedly demonstrated in many reciprocal transplant experiments on many species over many years: plant species are under strong selective forces that adapt them to their local environments, temperature in particular.

But the main point here is not that, but rather the following.

Compare the results from those two situations with those in which the four taxa are instead competing against a new set of competitors, which are shown by the orange bars in panel (a) and the blue ones in (b) (the colors represent the three elevations from which the three plant communities in the study were taken). Panel (b) gives the results from competitors brought down from 2600 m to 2000 m to compete with the four target taxa, which originated in, and thus are presumably adapted to, the temperature at 2000 m. So, of course, we expect that the target taxa are then going to out-compete the immigrants from higher elevation, and indeed, comparison of the yellow and blue bars in (b) shows that the survival rate for three of them increases, from 80-90% to around 100%, and for the fourth (P. vernalis), from about 60% to 80%. So the ceiling of possible improvement in survival rate is limited by the fact that they already survive at a high rate: if you’re already surviving at a 60-90 percent clip, then the largest improvement you can make when your environment becomes less competitive is 100 – (60 to 90) = 10 to 40 percentage points, since 100% is an absolute ceiling.

By contrast, in panel (a), where the target taxa have experienced increased temperatures (= transplanted downslope), this is not the case. In this situation, when the target taxa grow with new competitors, it is now the targets (and their original community) that experience the T increase, while their new competitors (orange bars) do not–they grew at that elevation to begin with. Here, the yellow bars show the effects of the temperature changes on survival, and the closer they are to 100 percent, the greater is the potential that new competitors from a new community (orange bars) will have some detectable effect on survival rate. And we strongly suspect that they will indeed have just such an effect, because they are very likely adapted to the thermal regime at that elevation. The competitive environment, in this case, now gets worse for the targets when exposed to new competitors, not better as it does in panel (b). The survival rates within the original community (panel a, yellow) now range from about 25 to 95 percent, and so you’ve got exactly that much room for an effect to be observed…which is a lot higher than the 10 to 40 percent in panel (b).
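The "room for an effect" arithmetic in the last two paragraphs can be written out explicitly. This is only a sketch: the survival fractions are the eyeballed yellow-bar ranges quoted in this post, not data from the paper, and the `headroom` function is a hypothetical helper introduced here for illustration.

```python
def headroom(baseline, direction):
    """Largest possible change in a survival fraction that is bounded by 0 and 1."""
    if not 0.0 <= baseline <= 1.0:
        raise ValueError("baseline must be a fraction between 0 and 1")
    if direction == "increase":
        return 1.0 - baseline   # survival cannot rise above 100 percent
    if direction == "decrease":
        return baseline         # survival cannot fall below 0 percent
    raise ValueError("direction must be 'increase' or 'decrease'")

# Eyeballed yellow-bar ranges quoted in this post (assumed, not exact data).
panel_b_baselines = (0.60, 0.90)  # original community at the original elevation
panel_a_baselines = (0.25, 0.95)  # original community moved down 600 m

# Panel (b): new competitors are expected to help, so the room is upward.
room_b = sorted(headroom(s, "increase") for s in panel_b_baselines)
# Panel (a): new competitors are expected to hurt, so the room is downward.
room_a = sorted(headroom(s, "decrease") for s in panel_a_baselines)

print("panel (b) room:", [round(r, 2) for r in room_b])
print("panel (a) room:", [round(r, 2) for r in room_a])
```

With these values the available room is 0.10 to 0.40 in panel (b) and 0.25 to 0.95 in panel (a), which is the asymmetry described above.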

The point of all this is that the comparisons performed are apples to oranges: there’s a much larger “potential effect space” in which the effect of a new community on survival can be realized in situation (a) than in (b). But their statistical tests just evaluate changes using the raw survival rates, that is, they compared the observed differences between the yellow and orange bars in panel (a) with those between the yellow and blue bars in panel (b). Because those differences were statistically significant for 3/4 of the species in the first case, but for none of them in the second, they conclude that community composition effects on survival rate are more important for species that don’t migrate with temperature increases than for those that do.
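One way to see why raw differences mislead here is to rescale each observed gain in panel (b) by the gain that was actually possible. The sketch below does that with the eyeballed values quoted earlier in this post (80-90% rising to around 100%, and about 60% rising to 80%). It is an illustration of the ceiling problem only, not the authors’ method and not a proposed replacement test; `fraction_of_headroom_used` is a hypothetical name.

```python
def fraction_of_headroom_used(before, after):
    """Observed gain in survival divided by the largest gain that was possible."""
    room = 1.0 - before
    if room <= 0.0:
        raise ValueError("no room for improvement when survival is already 100 percent")
    return (after - before) / room

# (before, after) survival fractions eyeballed from panel (b), as quoted in this post.
# These are assumed, approximate values, not data taken from the paper.
panel_b_pairs = {
    "three species, low end": (0.80, 1.00),
    "three species, high end": (0.90, 1.00),
    "P. vernalis": (0.60, 0.80),
}

for label, (before, after) in panel_b_pairs.items():
    raw = after - before
    scaled = fraction_of_headroom_used(before, after)
    print(f"{label}: raw gain {raw:.2f}, share of headroom used {scaled:.2f}")
```

On the raw scale these gains are only 0.10 to 0.20, which looks small next to the declines possible in panel (a). Relative to what was possible, though, they amount to roughly half to all of the available improvement.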

This conclusion–what does it even mean? They are testing the same suite of target species in each treatment: they’re going to respond one way or the other. The authors didn’t actually test what happens to one group of species that responds to T increases by moving upslope, and some other group that doesn’t, much less the effect of their original communities moving, or not, along with them. They couldn’t: they don’t know which species are going to do what in response to T change. They had to try to mimic what would happen, but you can’t really do so properly enough to address the question they pose. We don’t even know what these four taxa will do under a T increase, let alone if or how this generalizes to something larger. It’s statistically invalid, smoke and mirrors.

There are several other problems I could get into as well, but don’t have the time for. For one, their conclusion of no effect of new competitors on survival rate when species respond to temperature changes would be suspect if one were to aggregate the data over all four species, instead of testing them one at a time and reporting the significance levels, as the authors did: the survival rate (Fig. 2b) is in fact higher, for each species, when grown with new competitors. But the data needed to do so are not provided. We also don’t know how much taxonomic difference/similarity there was between the three community types that were tested–again, no data on this are presented. Another big question, aside from the monocot/dicot issue already mentioned, is why they would pick four taxa of such limited elevational range instead of more widely ranging taxa, ones likely to be more genetically variable and thus to respond to climatic and competitive changes differently than these four. And there’s the very big issue of gradual versus instant T change; we expect the former, not the latter. And arguably largest of all is the positively enormous issue that survival rates in the absence of reproductive rates are essentially useless when it comes to predicting the community dynamics in any ecosystem.

So, this study in this journal is a major problem. If it were presented as a new, general methodological approach to be followed, that would be one thing, but it isn’t and if it were it wouldn’t be here because that’s not what Nature publishes. It doesn’t contain the methodological detail (biological or statistical) and discussion of issues and pluses/minuses etc., that would be needed for such. Yes, it’s about 110 percent certain, as they state, that the effects of climatic changes on community structure and function will indeed depend on specifics of the communities in question–and a bunch more besides those. We already know that from a thousand lines of evidence over 100+ years, and this study doesn’t really contribute anything new towards that conclusion.

I don’t think this paper, as is, should even be published in an ecology or plant biology journal. So how/why did it get published in Nature? We’re not likely to get an answer to that question, but of what value would it likely be anyway, given what we can determine ourselves from careful reading and thought? I’m pretty sure I know the answer, but I’m not going to get further into it right now. I’m tired and depressed from reading yet another bad paper in Nature that a whole bunch of people will likely glance at, maybe read the abstract from, and assume is valid. That’s how the thing works.

Wow great commentary.

Thanks, I hope it was follow-able.

“First, it should be obvious that any ability to generalize from the results of this study is going to be extremely limited. Indeed, this is almost always a major limitation to manipulative ecology experiments.”

Boy howdy. I attempt to grow all sorts of mostly US native plants in my rock garden from the alpine to the desert (a luxury of living near Seattle). I put a plastic cover over the dryland ones in winter but otherwise it is what rock gardeners derisively call an “open garden.” Some species just flat won’t grow. Some will establish well and flower but then fade, or fade and appear healthy elsewhere by seed or rhizome. Some dryland species absolutely thrive, and rather than a brief flush of bloom in spring as they do in their native habitat, they bloom over months. I am able to grow Talinum spinescens, a rare plant found only in cracks in basalt pavement in scabland coulees. It loves my rock garden! Its nearby relative, Talinum okanaganense, grows on a variety of substrates in shrub steppe and forest margins, but a few attempts to grow it have all resulted in quick failure. So yeah, it’s a complicated world out there.

Great stuff Matt. And those are just the surprises that come without the added complications of community interactions, where it really gets hairy. I used to spend time in the botanic gardens in Davis/SF/Berkeley and was always surprised at the stuff I’d see growing. Mountain hemlock for example (Tsuga mertensiana)–you won’t find it much below 10,000 ft in the Sierra/Cascade axis in CA, mostly well higher. Plants will continuously surprise one in all kinds of ways–they are genetically diverse and highly sensitive to several types of hormonal controls, particularly in their reproductive systems. We have light-years to go to be able to predict how they will collectively respond to non-equilibrium situations of various types.